SparkToro’s zero-click study reports that, in the United States, only 360 of every 1,000 Google searches result in a click to the open web. That shift makes the accuracy of your on-site AI search a business-critical capability rather than a nice-to-have feature. If users cannot quickly find information inside long videos, they abandon the task, and your product loses their trust.

This article breaks down a step-by-step process for improving accuracy in AI-powered video search, from data prerequisites to measurement and GEO-ready (generative engine optimization) answer retrieval. If your use case is finding a precise visual moment, start with video frame search to ground your requirements in real retrieval behavior.

The essentials in 30 seconds

Define “accurate” per use case: frame match, scene match, or answerable passage, then design to that target.

Fix data and metadata first; embeddings cannot compensate for missing fields, duplicates, and inconsistent language.

Use hybrid retrieval plus reranking to reduce false positives without sacrificing recall.

Measure offline and online, then close the loop with logs, labeling, and controlled reindexing.

Before you tune models, you need a shared definition of “correct.”

Prerequisites that make AI search accuracy achievable

Relevance targets, corpus boundaries, and production constraints

Accuracy starts with explicit relevance objectives: “best matching frame,” “best matching shot,” “best matching clip,” or “best answerable moment with evidence.” Each goal changes your index granularity, your boosts, and your reranking budget. For commerce-like catalogs, Baymard’s search benchmark found that 41% of sites fail to support key query types, a reminder that relevance failures are often basic, not exotic.

Lock down what is searchable: transcripts, OCR text, captions, shot boundaries, keyframes, tags, rights metadata, and access control lists. Make “who can see what” a first-class filter, aligned with security policies and partner obligations. Keep latency budgets explicit, because every extra AI stage adds cost per query and eats into throughput.

Ground truth, real queries, and an operational checklist

- Data quality: missing titles, empty fields, inconsistent units, and broken timecodes

- Compliance: GDPR retention rules for logs and labels

- Logs: zero-result queries, reformulations, and click or play-through signals

- Monitoring: drift, index freshness, and error rates per content source

- Governance: who can label, who can ship, and who can roll back
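The data-quality item in the checklist above can be automated as a pre-indexing audit. The sketch below is a minimal illustration, assuming a hypothetical `VideoRecord` shape with a title, a description, a duration, and a list of `(start, end)` segment timecodes in seconds. It flags missing titles, empty descriptions, and timecodes that are inverted, overlapping, or past the end of the video.

```python
from dataclasses import dataclass, field


@dataclass
class VideoRecord:
    # Hypothetical record shape; adapt field names to your catalog.
    video_id: str
    title: str = ""
    description: str = ""
    duration_s: float = 0.0
    segments: list = field(default_factory=list)  # [(start_s, end_s), ...]


def audit_record(rec: VideoRecord) -> list:
    """Return human-readable data-quality issues for one record."""
    issues = []
    if not rec.title.strip():
        issues.append("missing title")
    if not rec.description.strip():
        issues.append("empty description")
    prev_end = 0.0
    for start, end in rec.segments:
        # Broken timecodes: negative or inverted spans, overlaps with the
        # previous segment, or spans that run past the video's duration.
        if start < 0 or end <= start:
            issues.append(f"inverted or negative timecode {start}-{end}")
        elif start < prev_end:
            issues.append(f"overlapping timecode {start}-{end}")
        elif rec.duration_s and end > rec.duration_s:
            issues.append(f"timecode {start}-{end} exceeds duration")
        prev_end = max(prev_end, end)
    return issues
```

Running the audit on every ingest batch, and routing flagged records to an owner before reindexing, keeps broken timecodes from silently producing chunks that point at the wrong moment.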

Define accuracy per video task, not as a generic score.

Treat permissions and provenance as ranking inputs, not afterthoughts.

Ship only what you can observe and debug in production.

Once prerequisites are clear, you can safely attack the biggest accuracy killer: messy inputs.

Clean data and metadata to stop garbage-in ranking

Normalization, deduplication, enrichment, and chunking

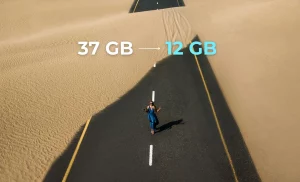

Normalize text and metadata so models see consistent meaning: encoding, casing, punctuation, and multilingual fields. Standardize units and abbreviations, especially for camera specs, aspect ratios, or product attributes tied to video content. Deduplicate at multiple levels: identical videos, near-duplicate exports, alternate cuts, and canonical versions.
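A minimal sketch of the normalization and exact-deduplication steps, assuming records are plain dicts with hypothetical `title` and `transcript` keys. It applies Unicode NFKC normalization, case folding, quote and whitespace cleanup, and a small illustrative unit map, then hashes the normalized content so re-exports that differ only in formatting collapse to one record. It does not catch alternate cuts or re-encoded near-duplicates; those typically need perceptual hashing or embedding similarity, which this sketch leaves out.

```python
import hashlib
import re
import unicodedata

# Illustrative unit/abbreviation map; replace with your library's conventions.
UNIT_ALIASES = {
    "frames per second": "fps",
    "millimeters": "mm",
    "millimetres": "mm",
}


def normalize_text(text: str) -> str:
    """NFKC-normalize, casefold, unify quotes and whitespace, map unit aliases."""
    text = unicodedata.normalize("NFKC", text).casefold()
    text = re.sub(r"[\u2018\u2019]", "'", text)  # curly single quotes
    text = re.sub(r"[\u201c\u201d]", '"', text)  # curly double quotes
    text = re.sub(r"\s+", " ", text).strip()
    for alias, canonical in UNIT_ALIASES.items():
        text = re.sub(rf"\b{re.escape(alias)}\b", canonical, text)
    return text


def fingerprint(title: str, transcript: str) -> str:
    """Stable hash of normalized content; formatting-only variants collide."""
    payload = normalize_text(title) + "\n" + normalize_text(transcript)
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


def dedupe(records: list) -> list:
    """Keep the first record per fingerprint.

    Assumes records are pre-sorted so the canonical version comes first.
    """
    seen, kept = set(), []
    for rec in records:
        key = fingerprint(rec["title"], rec["transcript"])
        if key not in seen:
            seen.add(key)
            kept.append(rec)
    return kept
```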

Enrich entities that users actually search: people, brands, locations, and recurring objects. Add domain synonyms and stopwords that reflect your library, not generic search. For vector retrieval, chunk by shot, scene, or caption segment, and always attach a title-like label so rerankers have anchors. Good chunking reduces irrelevant matches and improves satisfaction because results feel intentional, not accidental.
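One way to implement shot-level chunking is sketched below, assuming you already have shot start times (for example from a shot-boundary detector) and timestamped caption segments. Each caption is assigned to the shot in which it starts, and every chunk gets a title-like `label` built from the video title and shot position. The `Chunk` type and the label format are hypothetical; adapt them to whatever your reranker expects.

```python
import bisect
from dataclasses import dataclass


@dataclass
class Chunk:
    video_id: str
    start_s: float
    end_s: float
    label: str  # title-like anchor for rerankers (hypothetical format)
    text: str


def chunk_by_shot(video_id, video_title, shot_starts, captions):
    """Group caption segments into shot-level chunks.

    shot_starts: sorted shot start times in seconds, beginning at 0.0.
    captions: (start_s, end_s, text) tuples; each caption goes to the shot
    in which it starts. The last shot ends at the latest caption end time.
    """
    if not captions:
        return []
    video_end = max(end for _, end, _ in captions)
    buckets = [[] for _ in shot_starts]
    for start, _, text in sorted(captions):
        # Index of the last shot that starts at or before this caption.
        idx = max(bisect.bisect_right(shot_starts, start) - 1, 0)
        buckets[idx].append(text)
    chunks = []
    for i, texts in enumerate(buckets):
        if not texts:
            continue  # shots with no captions produce no text chunk
        start = shot_starts[i]
        end = shot_starts[i + 1] if i + 1 < len(shot_starts) else video_end
        label = f"{video_title} | shot {i + 1} @ {start:.0f}s"
        chunks.append(Chunk(video_id, start, end, label, " ".join(texts)))
    return chunks
```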

Clean data is necessary, but users still search in unpredictable ways.

Structure queries around intent, not just keywords

Intent segmentation, rewriting, and log instrumentation

Classify intent into navigational, informational, and transactional patterns, even for video. “Find the clip” is navigational; “what happens when” is informational; “download the segment” is transactional. Short queries, acronyms, and typos are common, so add rewriting and expansion tuned to your content’s language and naming conventions.

Snippet (query rewriting in practice): If a user searches “BTS intro”, rewrite to “behind the scenes introduction” and add an implicit filter like content type: extras. If they paste an internal ID like 4c04-b822-cca6ae8026f0, route to an exact-match resolver before semantic retrieval.
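The snippet above can be sketched as a small router. The expansion table, the implied `content_type` filter, and the ID pattern (hyphen-separated hex groups, chosen so that the example ID in the text matches) are all assumptions for illustration, not a prescribed format. Anything that looks like an ID skips semantic retrieval entirely.

```python
import re

# Hypothetical, hand-curated expansions: token -> (rewrite, implied filters).
EXPANSIONS = {
    "bts": ("behind the scenes", {"content_type": "extras"}),
    "intro": ("introduction", {}),
}
# Assumed ID shape: hyphen-separated hex groups of 4+ characters.
ID_PATTERN = re.compile(r"^[0-9a-f]{4,}(?:-[0-9a-f]{4,})+$", re.IGNORECASE)


def route_query(raw: str) -> dict:
    """Route ID-like queries to exact match; rewrite everything else."""
    query = raw.strip()
    if ID_PATTERN.match(query):
        # Exact-match resolver first: no embedding or semantic call needed.
        return {"route": "exact_id", "query": query, "filters": {}}
    tokens, filters = [], {}
    for token in query.lower().split():
        rewrite, implied = EXPANSIONS.get(token, (token, {}))
        tokens.append(rewrite)
        filters.update(implied)
    return {"route": "hybrid", "query": " ".join(tokens), "filters": filters}
```

A real deployment would learn expansions from logs rather than hard-coding them, and would tighten the ID pattern to your actual ID scheme so that ordinary hyphenated words are not misrouted.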

Instrument behavior: track reformulations, pogo-sticking between results, and “play then skip” interactions as negative feedback. Base query suggestions on successful sessions, not on popularity alone, to protect relevance in niche libraries.
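Reformulations and “play then skip” events can be derived from raw session logs. The sketch below assumes a hypothetical event schema (`search`, `play`, and `skip` events with timestamps) and illustrative thresholds: a different search within 30 seconds counts as a reformulation of the earlier query, and a skip within 10 seconds of play counts as negative feedback for that result. Pogo-sticking detection would follow the same pattern and is omitted for brevity.

```python
from dataclasses import dataclass


@dataclass
class Event:
    # Hypothetical event schema for one session.
    session_id: str
    ts: float  # seconds since session start
    kind: str  # "search", "play", or "skip"
    query: str = ""
    result_id: str = ""


def negative_signals(events, reformulation_window_s=30.0, min_watch_s=10.0):
    """Return (reformulated_queries, skipped_result_ids) for one session."""
    reformulated, skipped = [], []
    last_search = None
    play_started = {}  # result_id -> ts of the most recent play
    for e in sorted(events, key=lambda ev: ev.ts):
        if e.kind == "search":
            # A different query shortly after the previous one suggests the
            # first query did not satisfy the user.
            if (last_search is not None
                    and e.query != last_search.query
                    and e.ts - last_search.ts <= reformulation_window_s):
                reformulated.append(last_search.query)
            last_search = e
        elif e.kind == "play":
            play_started[e.result_id] = e.ts
        elif e.kind == "skip" and e.result_id in play_started:
            # "Play then skip": abandoned before min_watch_s seconds.
            if e.ts - play_started.pop(e.result_id) < min_watch_s:
                skipped.append(e.result_id)
    return reformulated, skipped
```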

Rewriting should add context and constraints, not just synonyms.

Logs are training data; treat them as a product surface.

Intent-aware routing prevents expensive semantic calls when an exact match exists.

With intent handled, you can make embeddings work for video instead of fighting your schema.

Deploy embeddings that match your domain and retrieval shape

Model choice, vector index tuning, and field alignment

Choose embeddings based on modality coverage and freshness needs: transcripts-only, multimodal, or frame-level embeddings. In video search, align fields by purpose: titles for precision, descriptions for recall, and structured attributes for filtering and boosts. If you mix modalities, keep provenance tags so you can debug why a match happened.

Flow: Ingest video → extract keyframes and transcripts → chunk by shot/segment → generate embeddings → build HNSW index → retrieve candidates → apply filters (rights, access) → rerank → return passages or frames
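The retrieve, filter, and rerank stages of this flow can be sketched in a few lines. This is a minimal illustration under stated assumptions: an exhaustive cosine scan stands in for the HNSW index (it returns exact neighbors at linear cost), chunks are dicts with hypothetical `vec`, `allowed_groups`, and `rights_ok` keys, and `rerank` is a placeholder for a cross-encoder scoring function.

```python
import math


def cosine(a, b):
    """Cosine similarity of two equal-length vectors (0.0 if either is zero)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def search(query_vec, chunks, user_groups, rerank, k_candidates=50, k_final=5):
    """Retrieve candidates, apply rights/access filters, then rerank.

    The exhaustive scan below stands in for an HNSW index lookup.
    `rerank(chunk) -> float` is a placeholder for a cross-encoder score.
    """
    candidates = sorted(
        chunks, key=lambda c: cosine(query_vec, c["vec"]), reverse=True
    )[:k_candidates]
    # Hard filters before reranking, so reranker budget only goes to
    # results this user is allowed to see.
    eligible = [
        c for c in candidates
        if c["rights_ok"] and c["allowed_groups"] & user_groups
    ]
    return sorted(eligible, key=rerank, reverse=True)[:k_final]
```

Filtering after candidate retrieval, as the flow describes, can leave too few eligible results when permissions are restrictive; many vector stores can apply filters during the index search instead, which avoids that starvation.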

Use contextual matching to reduce ambiguity: entity linking, time constraints, and disambiguation rules. This is where natural language processing adds practical value, because it turns “it” and “that scene” into resolvable references.

Embeddings alone rarely hit production-grade accuracy, so you need a robust hybrid stack.

Build a hybrid search system that stays accurate under pressure

Lexical plus semantic fusion, reranking, and compliance filters

Hybrid retrieval fuses lexical matches (for exact terms, names, SKUs, and timestamps) with semantic matches (for paraphrases and concept search). Add a cross-encoder reranker over the top candidates, within your latency budget, to cut false positives while preserving recall. Enforce hard constraints as well: stock status for ecommerce, rights windows for video licensing, and access entitlements. Applying them before reranking keeps the reranker from spending its budget on results the user could never see.
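The text does not prescribe a fusion method; reciprocal rank fusion (RRF) is one widely used option and is sketched below, followed by a hard-constraint pass for rights windows and entitlements. The constant `k=60` is the value commonly used with RRF, and the catalog fields (`rights_start`, `rights_end`, `entitlement`) are hypothetical.

```python
def reciprocal_rank_fusion(ranked_lists, k=60):
    """Fuse ranked id lists: score(d) = sum over lists of 1 / (k + rank_of_d)."""
    scores = {}
    for ranking in ranked_lists:
        for rank, doc_id in enumerate(ranking, start=1):
            scores[doc_id] = scores.get(doc_id, 0.0) + 1.0 / (k + rank)
    return sorted(scores, key=scores.get, reverse=True)


def apply_hard_constraints(doc_ids, catalog, entitlements, now):
    """Drop results outside their rights window or the user's entitlements."""
    return [
        d for d in doc_ids
        if catalog[d]["rights_start"] <= now < catalog[d]["rights_end"]
        and catalog[d]["entitlement"] in entitlements
    ]
```

Because RRF uses only ranks, it avoids normalizing lexical scores (such as BM25) against cosine similarities, which live on different scales. A result that appears high in both lists beats one that tops only a single list.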

Diversity matters in video: avoid returning five near-identical cuts of the same moment. Apply near-duplicate detection, then enforce result variety across episodes, angles, or sources. Cold-start content needs controlled popularity signals so new uploads can surface without drowning relevant evergreen clips.
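A greedy diversity pass over an already ranked list is sketched below. It assumes each result carries hypothetical `episode`, `start_s`, and `end_s` fields, treats results from the same episode whose time spans overlap (or sit within a few seconds of each other) as near-duplicates, and caps the number of results per episode. It is a time-based heuristic only; detecting alternate cuts stored as separate files would need content fingerprints.

```python
def diversify(results, max_per_episode=2, min_gap_s=5.0):
    """Greedy diversity filter that preserves the incoming rank order.

    A result is dropped if its episode already has max_per_episode kept
    results, or if its span overlaps (or lies within min_gap_s of) a span
    already kept from the same episode.
    """
    kept, per_episode = [], {}
    for r in results:
        ep = r["episode"]
        if per_episode.get(ep, 0) >= max_per_episode:
            continue
        near_duplicate = any(
            k["episode"] == ep
            and r["start_s"] < k["end_s"] + min_gap_s
            and k["start_s"] < r["end_s"] + min_gap_s
            for k in kept
        )
        if near_duplicate:
            continue
        kept.append(r)
        per_episode[ep] = per_episode.get(ep, 0) + 1
    return kept
```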

Now you need proof, not gut feel, that accuracy is improving.

Measure AI search accuracy with offline and online evidence

Test sets, metrics, and human evaluation

Build a test set from real queries, then oversample critical journeys and long-tail phrasing. For a retail reference point, the Prefixbox benchmark report lists a 5.4% search conversion rate for the first half of 2024. Treat it as a directional sign that search users are high-intent, even if your video product optimizes for watch completion rather than purchases.

| Metric | What it rewards | Best for video search |
|---|---|---|
| Precision@k | Top results are correct | Frame or clip “first hit” tasks |
| Recall@k | Correct results are included | Investigations and compliance review |
| MRR | First relevant result appears early | Editors and researchers under time pressure |
| NDCG | Graded relevance and ranking quality | Mixed lists: exact frames plus related scenes |
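The four metrics in the table can be computed as follows. This is a minimal sketch with binary relevance for Precision@k, Recall@k, and MRR, and graded relevance for NDCG using linear gains and a `log2(rank + 1)` discount. Other NDCG variants use exponential gains (2^g - 1), so check which one your team reports. The grade scale in the comment is a hypothetical example.

```python
import math


def precision_at_k(ranked, relevant, k):
    """Share of the top-k results that are relevant."""
    return sum(1 for d in ranked[:k] if d in relevant) / k


def recall_at_k(ranked, relevant, k):
    """Share of all relevant items that appear in the top k."""
    if not relevant:
        return 0.0
    return sum(1 for d in ranked[:k] if d in relevant) / len(relevant)


def mrr(rankings, relevants):
    """Mean over queries of 1/rank of the first relevant hit (0 if none)."""
    total = 0.0
    for ranked, relevant in zip(rankings, relevants):
        for rank, d in enumerate(ranked, start=1):
            if d in relevant:
                total += 1.0 / rank
                break
    return total / len(rankings) if rankings else 0.0


def ndcg_at_k(ranked, grades, k):
    """grades: id -> graded relevance (e.g. 2 = exact frame, 1 = related scene)."""
    def dcg(gains):
        return sum(g / math.log2(i + 2) for i, g in enumerate(gains))
    actual = dcg([grades.get(d, 0) for d in ranked[:k]])
    ideal = dcg(sorted(grades.values(), reverse=True)[:k])
    return actual / ideal if ideal > 0 else 0.0
```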

Calibrate human judgments with clear labeling guidelines and adjudication of disagreements. Online, run A/B tests with guardrail metrics: failed searches, time-to-first-play, and downstream engagement.

Answer engines changed what “good retrieval” looks like, so accuracy must be GEO-ready.

Optimize accuracy for GEO in 2026

Answer-first retrieval, verifiability, and anti-hallucination constraints

In a zero-click world, your search needs to return answerable passages and the evidence that supports them. The SparkToro study also highlights how often queries end without an external click, which raises the bar for on-page answers and internal search summaries.

Use passage retrieval: return the best segment, plus a confidence indicator and the source span. Add refusal behavior when evidence is missing, and keep summaries controlled and attributable. Structured outputs help: key attributes, timestamps, and short “why this result” explanations that reduce user frustration and improve understanding.
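Answer-first retrieval with a refusal path can be sketched as below. It assumes passage scores are already calibrated to [0, 1] by your reranker, and the 0.6 threshold is a hypothetical placeholder to tune on labeled data. The output carries the answer, a confidence value, the source span, and a short “why this result” string, or an explicit refusal when evidence is missing or weak.

```python
from dataclasses import dataclass


@dataclass
class Passage:
    video_id: str
    start_s: float
    end_s: float
    text: str
    score: float  # assumed calibrated to [0, 1] by the reranker


def answer_or_refuse(passages, min_confidence=0.6) -> dict:
    """Return the best passage with its evidence span, or an explicit refusal."""
    if not passages:
        return {"status": "refused", "reason": "no evidence retrieved"}
    best = max(passages, key=lambda p: p.score)
    if best.score < min_confidence:
        return {
            "status": "refused",
            "reason": f"best evidence scored {best.score:.2f}, below {min_confidence}",
        }
    return {
        "status": "answered",
        "answer": best.text,
        "confidence": round(best.score, 2),
        "source": {"video_id": best.video_id, "start_s": best.start_s, "end_s": best.end_s},
        "why": f"Matched segment {best.start_s:.0f}s-{best.end_s:.0f}s of {best.video_id}",
    }
```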

Optimize for “answer plus evidence,” not just “similarity.”

Refusals are a feature when trust matters.

Drift monitoring is part of accuracy, not a separate project.

The final step is turning all of this into a repeatable release process.

Validate results and keep accuracy stable in production

Go/no-go checks, monitoring, and an error-to-fix matrix

Validate against a fixed set of critical queries and their expected top results. Monitor 95th-percentile (p95) latency, zero-result rates, and click or play-through rates. If you serve multiple businesses or teams, segment dashboards by corpus, permissions, and query class so you can reach the right owner fast.
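A minimal release gate combining these checks might look like the sketch below. The `search_fn` callable, the top-3 cutoff, and the 300 ms p95 budget are hypothetical. The p95 uses the nearest-rank method with integer arithmetic so the rank is exact.

```python
def p95(latencies_ms):
    """Nearest-rank 95th percentile of a non-empty list of latencies."""
    ordered = sorted(latencies_ms)
    rank = (95 * len(ordered) + 99) // 100  # ceil(0.95 * n) without floats
    return ordered[rank - 1]


def go_no_go(critical_cases, search_fn, latencies_ms, p95_budget_ms=300.0, k=3):
    """Return (ship_ok, report); all thresholds are hypothetical defaults.

    critical_cases: list of (query, expected_id); search_fn(query) -> ranked ids.
    A case passes when expected_id appears in the top-k results.
    """
    failures, zero_results = [], 0
    for query, expected in critical_cases:
        results = search_fn(query)
        if not results:
            zero_results += 1
        if expected not in results[:k]:
            failures.append(query)
    latency = p95(latencies_ms)
    report = {"failures": failures, "zero_results": zero_results, "p95_ms": latency}
    return (not failures and latency <= p95_budget_ms), report
```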

Flow: Collect logs → sample failures → label with guidelines → tune retrieval and reranking → reindex → ship behind a flag → monitor drift → repeat

| Symptom | Likely cause | Fix |
|---|---|---|
| High false positives | Over-broad chunks, weak filters | Tighten chunk boundaries, apply hard constraints earlier |
| Low recall on niche queries | Missing synonyms, sparse metadata | Add domain synonyms, enrich entities, backfill key fields |
| Good offline scores, poor user experience | Mismatch between labels and real intent | Refresh labels from logs, add intent routing, update guidelines |
| Performance degrades over time | Data drift, model drift | Scheduled reindexing, drift alerts, controlled model updates |

Accurate search is a product: the process, not the model, is what keeps results accurate as your library grows.

FAQ on AI search accuracy

Many teams underestimate how often basic failures drive abandonment; Baymard’s benchmark is a useful reminder that query handling is often the hidden bottleneck.

How do you handle very short queries without tanking relevance?

Route short queries through intent detection first. If the query looks like a name, ID, or acronym, prefer lexical and exact match. If it is ambiguous, expand with curated synonyms and add lightweight clarifying facets. Then rerank with a cross-encoder on a small candidate set to keep latency under control.

Why choose hybrid search instead of purely semantic retrieval?

Hybrid search protects precision for exact terms, proper nouns, and timestamps, while semantic retrieval covers paraphrases. In video, users often mix both in one query. Hybrid also improves debuggability: you can see which signal won and adjust boosts, filters, and fields instead of guessing.

How can you reduce false positives without losing recall?

Start by improving chunking and adding hard constraints early: rights, access, language, and content type. Then add reranking with a model trained on your negatives. Finally, enforce diversity and near-duplicate removal so a single noisy cluster does not dominate the top results.

How much user signal do you need for meaningful learning?

You need enough volume to separate noise from intent. Prioritize high-signal events: result selection, watch duration, saves, and downstream actions. Use these interactions to build query-to-result pairs and to generate hard negatives. Combine them with small, high-quality human labels for calibration.
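Mining positives and hard negatives from interaction logs can be sketched as follows, assuming a hypothetical impression schema with the query, result, displayed rank, and watch time. A result watched for at least 30 seconds counts as a positive; a result shown above a positive but barely watched counts as a hard negative. Both the threshold and the rule are illustrative, and queries with no positive evidence are skipped because their negatives are too noisy to trust.

```python
def build_training_pairs(impressions, min_watch_s=30.0):
    """Return (positives, hard_negatives) as lists of (query, result_id).

    impressions: dicts with "query", "result_id", "rank", "watch_s"
    (hypothetical log schema; rank 1 is the top result).
    """
    by_query = {}
    for imp in impressions:
        by_query.setdefault(imp["query"], []).append(imp)
    positives, hard_negatives = [], []
    for query, imps in by_query.items():
        pos = [i for i in imps if i["watch_s"] >= min_watch_s]
        if not pos:
            continue  # no positive evidence: negatives would be guesswork
        lowest_pos_rank = max(i["rank"] for i in pos)
        positives += [(query, i["result_id"]) for i in pos]
        # Seen above a result the user chose, yet passed over: a hard negative.
        hard_negatives += [
            (query, i["result_id"]) for i in imps
            if i["watch_s"] < min_watch_s and i["rank"] < lowest_pos_rank
        ]
    return positives, hard_negatives
```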

What is the biggest risk to accuracy over time?

Silent drift. New content formats, changed metadata conventions, and shifting user preferences slowly break relevance. Prevent it with drift dashboards, scheduled reindexing, and periodic audits of queries with rising reformulations. Treat monitoring as part of the release cycle, not an afterthought.
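The “rising reformulations” audit can be automated by comparing per-query reformulation rates across two time windows. This sketch assumes hypothetical `(searches, reformulations)` counts per query and illustrative thresholds (at least 20 searches in the current window, and a rate increase of 1.5x or more). Flagged queries are sorted by the size of the increase.

```python
def rising_reformulation(baseline, current, min_searches=20, ratio=1.5):
    """Flag queries whose reformulation rate grew between two windows.

    baseline, current: dict query -> (searches, reformulations).
    Returns (query, baseline_rate, current_rate) tuples, largest increase first.
    """
    flagged = []
    for query, (searches, reforms) in current.items():
        if searches < min_searches or query not in baseline:
            continue  # too little traffic, or no baseline to compare against
        base_searches, base_reforms = baseline[query]
        if base_searches == 0:
            continue
        base_rate = base_reforms / base_searches
        rate = reforms / searches
        if (base_rate == 0 and rate > 0) or (base_rate > 0 and rate >= ratio * base_rate):
            flagged.append((query, round(base_rate, 3), round(rate, 3)))
    return sorted(flagged, key=lambda t: t[2] - t[1], reverse=True)
```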

You improve AI search accuracy by treating it as an end-to-end system: clean inputs, intent-aware query handling, embeddings aligned to your schema, hybrid retrieval with reranking, and disciplined measurement. If your goal is fast, trustworthy retrieval inside video, focus on answerable segments and frame-level evidence, then iterate with logs and labels. That is how you turn search into a reliable experience that drives engagement and long-term satisfaction across teams and partners.